PLVS: An open-source SLAM system with Points, Lines, Volumetric mapping and 3D incremental Segmentation

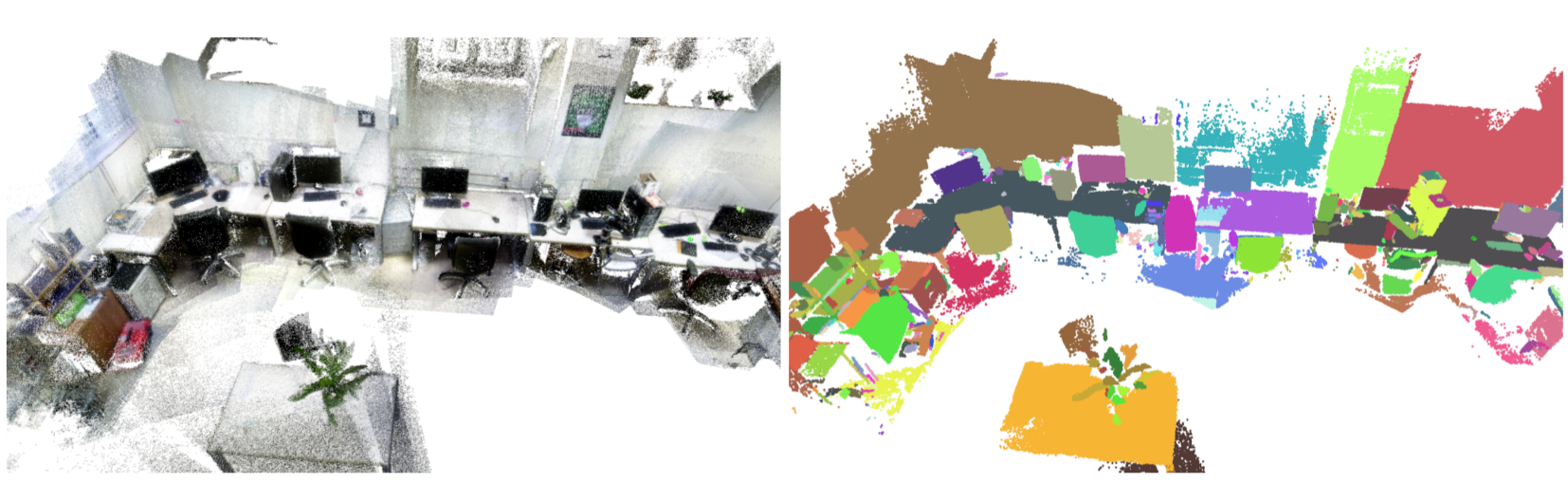

PLVS(*) is a real-time system that combines sparse RGB-D and stereo SLAM, volumetric mapping, and unsupervised incremental 3D segmentation. PLVS stands for Points, Lines, Volumetric mapping and Segmentation. The system runs entirely on the CPU and can optionally use available GPU resources for some specific tasks. The underlying SLAM module is sparse and keyframe-based; it relies on the extraction and tracking of keypoints and keylines. Several volumetric mapping methods are supported and integrated in PLVS. A novel “reprojection” error is proposed for bundle-adjusting line segments. This error better stabilizes the position estimates of the mapped line-segment endpoints and improves SLAM performance. An incremental segmentation method is implemented and integrated into the PLVS framework. Additionally, maps can be saved and reused as needed, providing multi-session mapping capabilities.

(*) You can pronounce it /plʌs/ (in Latin it would be read as “PLUS”, since ‘U’ and ‘V’ were allographs).
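To give an idea of what a reprojection error for a line segment can look like, here is a minimal Python sketch of the commonly used point-to-line formulation: the two 3D endpoints are projected into the image and their signed distances to the detected 2D line are taken as residuals. This is an illustration only. It is not the novel error proposed in PLVS (see the paper for that), and the function names, the pinhole model without distortion and the camera parameters are all assumptions made for the example.

```python
import math

def project(point_cam, fx, fy, cx, cy):
    """Pinhole projection of a 3D point (camera frame) to pixel coordinates."""
    x, y, z = point_cam
    if z <= 0:
        raise ValueError("point must be in front of the camera")
    return (fx * x / z + cx, fy * y / z + cy)

def line_through(p, q):
    """Normalized 2D line (a, b, c) through pixels p and q, with a^2 + b^2 = 1."""
    a = p[1] - q[1]
    b = q[0] - p[0]
    c = p[0] * q[1] - q[0] * p[1]
    norm = math.hypot(a, b)
    if norm == 0:
        raise ValueError("degenerate segment: endpoints coincide")
    return (a / norm, b / norm, c / norm)

def line_reprojection_error(P, Q, detected_p, detected_q, fx, fy, cx, cy):
    """Signed pixel distances of the projected 3D endpoints P and Q
    to the 2D line detected in the image (standard formulation, assumed)."""
    a, b, c = line_through(detected_p, detected_q)
    residuals = []
    for X in (P, Q):
        u, v = project(X, fx, fy, cx, cy)
        residuals.append(a * u + b * v + c)
    return tuple(residuals)
```

In a bundle adjustment, residuals of this kind would be minimized over the camera poses and the 3D endpoints. Note that this standard form leaves the endpoints free to slide along the line, which is the kind of instability an endpoint-stabilizing error is meant to reduce.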

Code: github.com/luigifreda/plvs

Paper: PLVS: A SLAM System with Points, Lines, Volumetric Mapping, and 3D Incremental Segmentation

Luigi Freda

PLVS is a work in progress, and improvements are coming soon. I work on it during my free time. If you want to join the team, feel free to get in touch: luigifreda[at] gmail[dot] com.

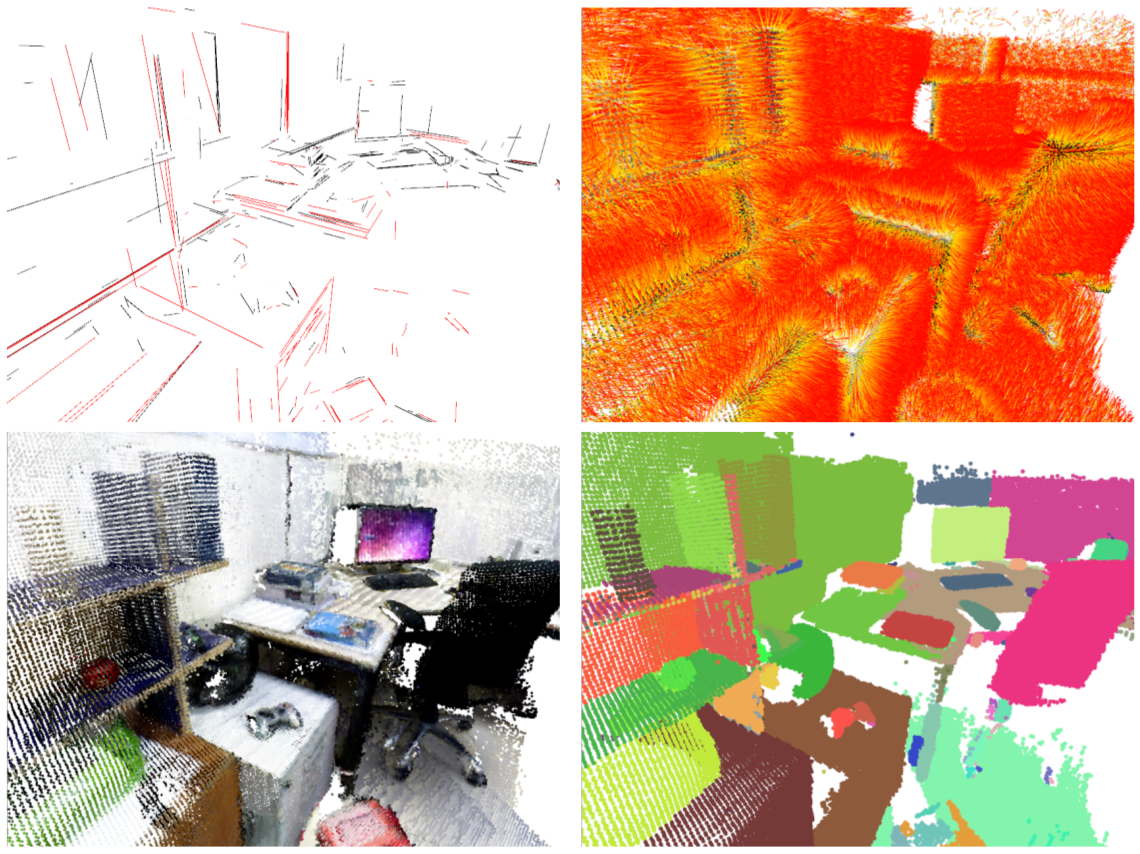

The following image shows some details of a 3D reconstruction: lines, normals, point cloud and segments.

RGB-D SLAM

Some of the following RGB-D videos were shown at the last TRADR Review (Yr4). No GPU acceleration was used in making them. What you see are real-time screen recordings. An XTION camera was used to record the TRADR dataset.

Stereo SLAM

The following videos show PLVS running in real time on the stereo datasets KITTI and EUROC. PLVS processes stereo images directly, without the need for pre-generated depth maps.

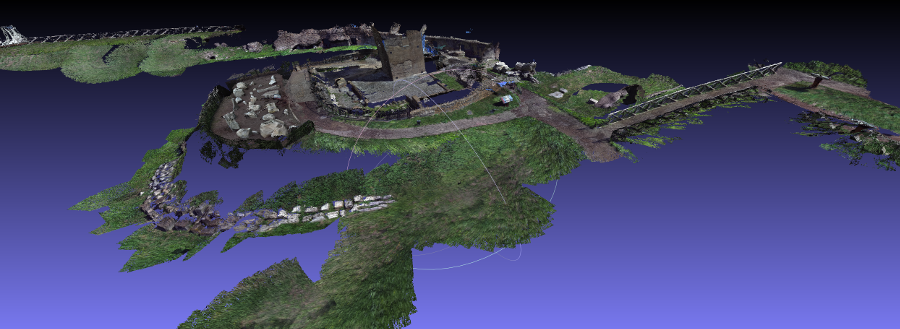
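For readers unfamiliar with how depth is recovered from a stereo pair, the textbook relation for a rectified rig is depth = focal length × baseline / disparity. The Python sketch below shows only that generic relation. It does not describe how PLVS implements its stereo front end, and the function name and parameter names are hypothetical.

```python
def depth_from_disparity(disparity_px, focal_px, baseline_m):
    """Depth in meters of a point seen by a rectified stereo pair.

    disparity_px: horizontal pixel shift between left and right images
    focal_px:     focal length in pixels
    baseline_m:   distance between the two camera centers in meters
    Returns None when the disparity is not positive (no valid depth).
    """
    if disparity_px <= 0:
        return None
    return focal_px * baseline_m / disparity_px
```

For instance, with an illustrative focal length of 700 px and a baseline of 0.5 m (made-up values, not those of any dataset), a disparity of 50 px corresponds to a depth of 7 m. Since depth is inversely proportional to disparity, distant points have small disparities and their depth estimates are more sensitive to matching errors.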

PLVS for Circus Maximus Mixed-Reality Experience

PLVS was used to reconstruct the Circus Maximus archaeological site and to prepare the Circus Maximus AR/VR experiences. You can find further details about this process on this page.

Acknowledgments

I am testing and improving the PLVS framework on an NVIDIA Jetson TX2 board, which was kindly donated by NVIDIA Corporation. I work on it during my free time. The plan is to run rigorous tests on other new NVIDIA Jetson boards as soon as possible. More details about this work and the performance obtained on the TX2 and other NVIDIA Jetson boards will be reported in upcoming documents and posts.