ICLR 2016

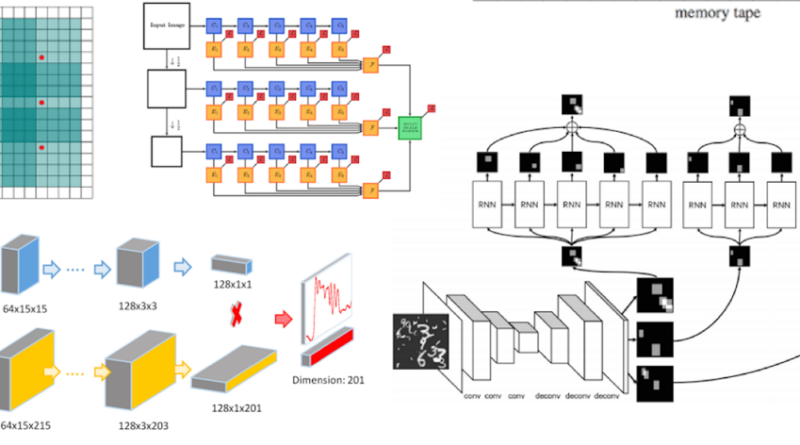

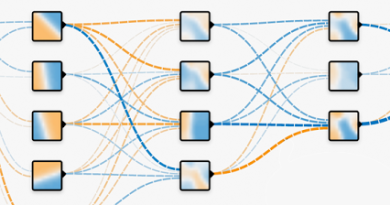

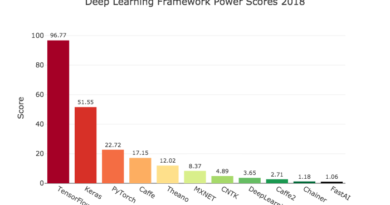

This interesting post by Tomasz Malisiewicz covers ICLR 2016. The author highlights new strategies for building deeper and more powerful neural networks, ideas for compressing big networks into smaller ones, and techniques for building “deep learning calculators.” The post summarizes ICLR research trends and gives a taste of what’s possible on top of today’s Deep Learning stack.

Part I: ICLR vs CVPR

Part II: ICLR 2016 Deep Learning Trends

Part III: Quo Vadis Deep Learning?
